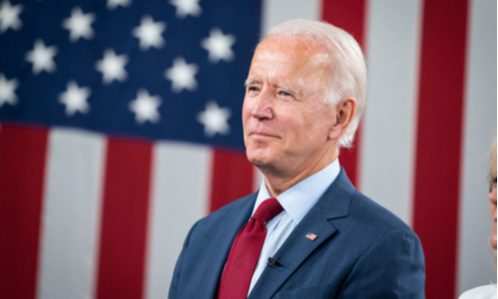

In response to President Biden’s October executive order on artificial intelligence (AI), the Biden administration announced on Tuesday its first steps toward establishing essential standards and guidance for the secure deployment of generative AI. The initiative, led by the Commerce Department’s National Institute of Standards and Technology (NIST), aims to shape industry standards around AI safety, security, and trust.

Commerce Secretary Gina Raimondo emphasized the importance of developing guidelines that would position America as a leader in the responsible development and use of rapidly evolving AI technology. The effort invites public input until February 2, with a focus on the testing methods needed to ensure the safety of AI systems.

NIST’s undertaking involves creating comprehensive guidelines for evaluating AI, facilitating the development of industry standards, and establishing testing environments for AI systems. The agency’s request for input extends to both AI companies and the public, focusing particularly on generative AI risk management and mitigating the risks associated with AI-generated misinformation.

Generative AI, capable of producing text, photos, and videos in response to open-ended prompts, has sparked both excitement and concerns in recent months. The technology’s potential to render certain jobs obsolete, influence elections, and surpass human capabilities raises significant questions about its responsible deployment.

President Biden’s executive order directed agencies to set standards for testing, addressing not only AI-related risks but also chemical, biological, radiological, nuclear, and cybersecurity risks. NIST is working on testing guidelines, including identifying where external “red-teaming” would be most beneficial for AI risk assessment and management.

The term “red-teaming” refers to a practice that has been employed for years in cybersecurity, involving simulations to identify new risks. NIST’s focus on setting best practices for red-teaming aligns with the broader goal of establishing a robust framework for the safe and responsible development of generative AI technology.

This initiative marks a significant stride toward ensuring that the deployment of AI aligns with ethical considerations, national security imperatives, and safeguards against potential risks, reflecting the administration’s commitment to staying at the forefront of AI development while prioritizing safety and responsibility.

Source: Reuters
