How to Think About AI Regulation

Every year, for the last 16 years, economists have come to Toulouse for the Digital Economics Conference to present cutting-edge research on just about everything digital. For those who don’t know Toulouse, or know it only as the headquarters of Airbus, La Ville Rose is a charming city in the Southwest of France, worth visiting if only to dine on cassoulet and sip on Armagnac afterward.

The sponsor of the conference, the Toulouse School of Economics, is a world-renowned graduate school and home of Nobel prize winner and two-sided market pioneer Jean Tirole.

This year, I was asked to give a talk on AI regulation. I had a great opening act. Sort of. A few weeks earlier, French President Emmanuel Macron gave a speech in Toulouse, pushing back on the EU’s grand plan to regulate AI. More on that later.

There’s been lots of talk, some action, some maybe action, and millions of words written on “regulating” Artificial Intelligence. My job was to provide some organizing principles for thinking about AI regulation. Here’s some of what I had to say.

Diffusion of AI Will Impact Most Everything Over Time

Many readers can skip over this section because you know a lot about AI, its use, and why it isn’t like crypto hype. For others, here’s a quick run-through.

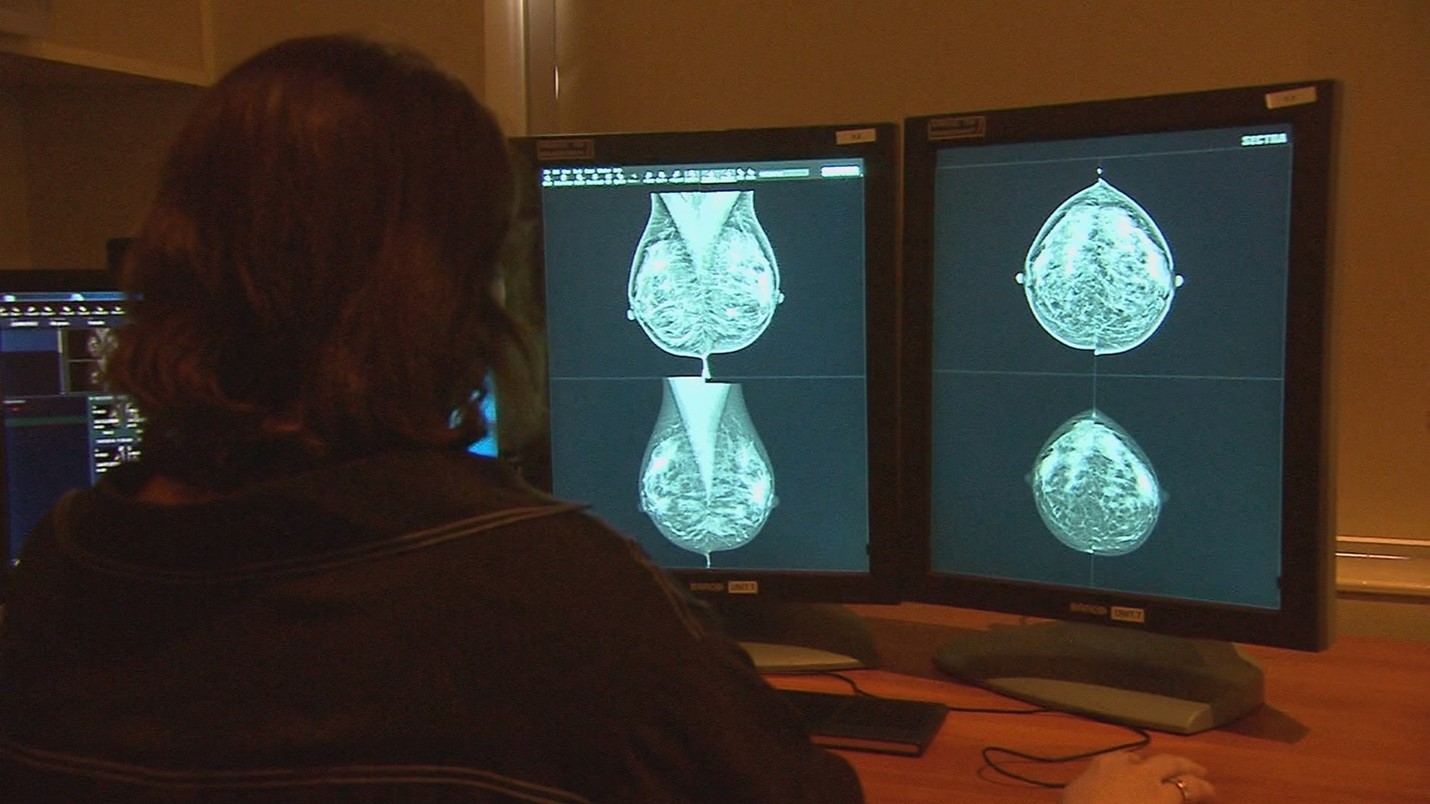

AI provides increasingly better substitutes for brainpower in an ever-growing range of useful applications. The time savings we are seeing in the early days are impressive. Two radiologists often read mammograms to reduce the likelihood of false negatives. A Swedish randomized controlled trial found that AI could replace one of the two readers and detect 20 percent more breast cancers. GitHub claims that developers who use its AI-based Copilot code up to 55 percent faster. DeepMind used AI to predict the structure of more than 200 million proteins, a job that would have taken graduate students a billion years of research time following traditional approaches. AI did something that just wasn’t feasible even with very smart humans.

These are just a few examples of what AI is accomplishing now and a glimmer into what it could do in the long run.

AI is what economists refer to as a general-purpose technology: one that, like electricity or the microchip, can support innovation in a wide range of applications throughout the economy. (By coincidence, GPT is an acronym used in both AI and economics to mean different things.)

AI has been around for decades, but the technology has improved dramatically as a result of the development of deep learning, graphics processing units and parallel processing, generative AI and large language models for producing original content from ingesting and being trained on data, convolutional neural networks for recognizing patterns in images, and more.

Just as critical, AI specialists have learned how to perfect the application of these technologies to productive applications (versus demonstrating AI’s prowess at playing games like chess and Go). Foundational models are now trained by estimating parameters from massive amounts of raw and human-labeled data. GPT-4 reportedly has about 1.7 trillion parameters.

AI technologies are going to diffuse rapidly through the economy based on an app ecosystem model with first-party and third-party apps.

There are now foundational models, such as GPT-4, DALL-E, BERT, and Stable Diffusion, that support a wide range of uses.

Killer first-party apps have already appeared based on them, such as ChatGPT based on GPT-3.5, and we are beginning to see third-party apps such as Jasper. Meanwhile, vertical, specialized models like GitHub Copilot for writing code have been developed.

AI models are being mixed and matched so that vertical models can have a conversational front end.

Many companies are developing specialized GenAI apps to solve specific problems. A Gartner poll from last October found that 45% of 1400 executives surveyed were piloting or experimenting with GenAI, with an additional 10% already having live solutions.

AI Technologies Will Have Diverse Implications for Laws and Regulations

AI will necessarily touch many existing laws and regulations because it will affect the things they govern, and it will require some new laws and regulations just because, well, stuff comes up. This statement isn’t meant to advocate regulation; it is just stating the obvious about a very smart general-purpose technology that will be diffused in the economy and society for decades to come in unpredictable ways.

Just the Facts, Ma’am

Fortunately, we already can deal with many AI issues.

Regulators and judges often have to make decisions by applying rules to facts. In many cases, AI and its applications will just lead to other facts to consider in applying those rules. Maybe important facts, maybe background noise, but in any event, not anything that decision-makers can’t handle.

The U.S. Food and Drug Administration (FDA), for example, approves medical devices. Low-risk ones just require notification, and high-risk ones require scientific evidence that the benefits outweigh the risks. AI technologies are now considered in this framework. The FDA will need to tap people who can help evaluate AI, but that doesn’t require any regulation changes. In 2023, the FDA approved 155 devices that use AI, with 87 of those used for radiology, such as Qure.ai’s X-ray solution.

Courts also deal with product liability issues in a variety of areas, including health, and in the US can apply well-trod principles of common law to address claims that arise from the use of AI.

Of course, as we learn more about AI we may discover that new issues arise that fall through the cracks.

Keeping the Right Balance

Laws and regulations are ultimately based on policymakers making tradeoffs. They could always make a rule more or less strict, so they must have a principle in mind to decide which direction to go in and where to settle down.

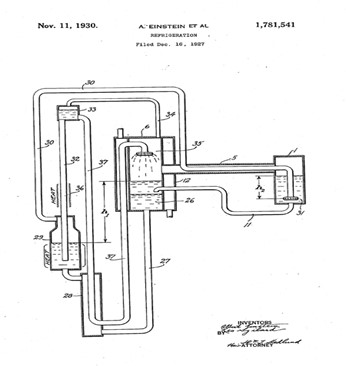

Patent law is based, for example, on considering the tradeoff between giving people the incentives to create new things and the ability of people to benefit from new things.

Patents only last so long (20 years in the U.S.) and don’t cover a lot of creations of the mind. Albert Einstein could patent his refrigerator invention, but anyone could use the ideas presented in his article on the general theory of relativity.

Artificial intelligence technologies might change the underlying tradeoffs for these laws and regulations. If they did that enough, we might consider changes to recalibrate the balance.

The current batch of complaints against foundational models by content creators concerns whether the foundational models have violated existing copyright law. Copyright law has historically provided limited protection to content. Almost every newspaper article, for example, is based, at least in part, on content generated by other media. The legal issue is whether the third party engaged in “fair use” of the content, which is OK.

The proposed class action case over the use of artwork is a good example. The judge in Sarah Andersen et al. v. Stability AI et al. largely rejected the plaintiffs’ complaint but is giving them another shot at a complaint that could pass muster.

Stable Diffusion is a foundational model for images trained on labeled data from the open-source LAION database of around 6 billion labeled images. Apps can use Stability’s DreamStudio to draw from the model. DeviantArt is a marketplace for art from creators, some of which is generated by AI, including by DreamStudio. Ms. Andersen, a cartoonist, claimed Stable Diffusion was trained on hundreds of her images, which in turn were used to create artwork that appeared in the DeviantArt marketplace. Other plaintiffs claimed the same and sought to represent a class.

GenAI may be new, but this issue isn’t. Authors and publishers sued Google over ingesting copyrighted books into Google Books in 2005. A decade later, after a long winding path, a district court and then an appeals court agreed this was fair use because Google transformed the works into a valuable service that wasn’t previously available. The Supreme Court refused to hear an appeal.

The judge in the Andersen case expressed skepticism that the plaintiffs could succeed because even the plaintiffs agreed that the images on DeviantArt are likely to be different from the works in the training data. That sounds transformative.

This isn’t necessarily the end of the story for public policy towards AI and copyright. Until AI sees the day when it can match human creativity, LLMs must be trained on human content to generate intelligent output. If content creators can’t make money or get credit for their work because of GenAI, then people won’t invest in spending time being artists, writers, or musicians. Humans would have less creative work to benefit from, and ironically, the LLMs would become poorer, too.

The courts may decide that the foundational models are engaging in fair use under existing copyright law, but legislators could then consider whether society would benefit from content creators getting more compensation from the use of their works to train AI technologies.

That said, for now, we have an existing body of law and regulations for which AI does not obviously raise novel issues. The legislative and executive branches might benefit from seeing how the courts analyze the copyright issues raised by AI before considering changing the laws already on the books.

Yikes, We Just Can’t Wait

“Deep fakes” have been around for a while, but they weren’t all that deep or easy to create, and there wasn’t a groundswell of support for imposing substantial sanctions on their creators, even when they caused harm.

AI has made it much easier to create (really) deep fakes — as in, who could tell it’s not real? This then increases personal and social costs such as fraud (impersonators), misinformation (social upheaval), and emotional distress (deep fake porn).

Wired reports that “deepfake porn videos are growing at an exponential rate, fueled by the advancement of AI technologies and an expanding deep fake ecosystem.” In the U.S., almost all states have laws against revenge porn, and some are considering beefing those up as a result of deep fakes. The laws make it criminal to disseminate intimate images without the person’s consent.

As Bloomberg reports, however, there are no federal laws against creating or sharing deep fake porn, and Section 230 protects platforms from liability for content posted on their sites.

More generally, we may need new laws or regulations — and perhaps fast — that deal with the myriad of harms that could arise from the spread of tools for creating deep fakes or websites that traffic in them.

Countries Weigh the AI Regulation/AI Innovation/AI Geopolitical Tradeoffs

This Is Never a Good Idea

A month before I arrived in Toulouse, President Macron raised the alarm on overly intrusive regulation:

“We can decide to regulate much faster and much stronger than our major competitors. But we will regulate things that we no longer produce or invest. This is never a good idea.”

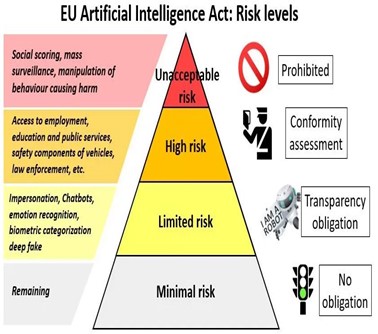

A few days before his talk, the EU trinity (Commission, Council, and Parliament) had reached a provisional agreement on the EU AI Act. Under the Act, “the higher the risk, the stricter the rules,” and as part of that “foundation models … must comply with specific transparency obligations before they are placed on the market.” The Council was proud that “as the first legislative proposal of its kind in the world, it can set a global standard for AI regulation … thus promoting the European approach to tech regulation in the world stage.”

That result would be more enviable if the EU had any major AI firms. Unfortunately, as I pointed out a few weeks ago, the EU has continued its decades-long inability to give birth to global digital tech leaders, so it just didn’t have a seat at the table.

Going forward, France looked like it could change that with its deep pool of AI scientists. Home-grown Mistral, which had made rapid strides at developing a foundational model, raised more than $400 million at a $2 billion valuation. Macron was worried that the EU AI Act could put France’s domestic firms at a disadvantage.

The EU AI Act isn’t a done deal, as it still has to be approved by the Committee of Permanent Representatives, which consists of representatives of all the member states.

The Contours of the AI Regulatory Debate

The EU debate highlights three key dimensions of the larger regulatory debate over AI technologies taking place around the world.

Technology vs. Use: Should we regulate AI as a technology — such as ex-ante regulation of foundation models — or only regulate particular applications of AI technologies, such as to generate harmful misinformation?

Regulation vs. Innovation: Should we have stricter regulation soon, given the potential risks, or less regulation for now, to promote innovation?

Regulation vs. National Competitiveness: Should we have stricter regulation soon, given the potential risks, or less regulation now, to facilitate competition with other jurisdictions?

Of course, everyone is worried about falling far behind China, and Europe is terrified it isn’t even in the race.

The Benefits of AI Loom Large

These debates are occurring at a time when countries see the immense value that AI can bring to their economies.

It isn’t just drastic innovation and placing bets on creating the next Apple. It is the value that all businesses can realize from deploying AI to reduce costs, deal with pressing labor shortages, and engage in more mundane innovation that also powers economic growth.

Sound policy demands that we account for the social benefits of AI technologies and possible harms in deciding the right balance to strike.

David S. Evans is an economist who has published several books and many articles on technology businesses, including digital and multisided platforms. His books include the award-winning Matchmakers: The New Economics of Multisided Platforms. He is currently the Chairman of Market Platform Dynamics and Global Leader for Digital Economy and Platform Markets at Berkeley Research Group. For more details on him, and his books and articles, go to davidsevans.org.