Smart Glasses Firm Vuzix Launches AI-Powered Facial Recognition

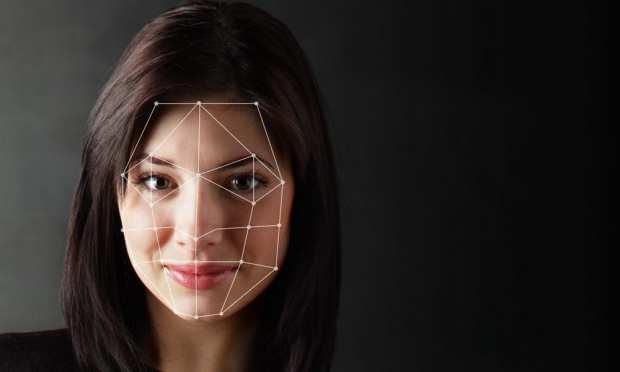

Smart glasses company Vuzix has developed eyewear that uses artificial intelligence (AI) to recognize faces in a crowd, according to a report by TechCrunch.

The technology was developed in partnership with software developer NNTC and will work with Vuzix's Blade smart glasses, which were introduced earlier this year at CES. It is mainly intended for law enforcement, although it also has some consumer applications.

The product, called iFalcon Face Control Mobile, matches faces against a database stored on a wearable computer that is paired with the headset, according to Vuzix.

The company said the system is especially useful for "law enforcement and security guards on patrol," and that it can locate 15 faces per frame in under a second. It can store a database of 1 million faces locally, without needing a connection to the cloud.

The technology works best when it knows whom it is looking for, such as a criminal or a missing person; the glasses do not tag or store new faces. The glasses are already in use in the UAE for "security operations," and about 50 pairs are currently in circulation.

San Francisco recently banned city agencies from using facial recognition technology, even as the technology is increasingly deployed at airports, where it is expected to cover the majority of flights departing the U.S. in the near future.

Meanwhile, Amazon shareholders recently rejected a proposal that would have barred the company from selling its facial recognition technology to governments. At a May 22 meeting, shareholders voted down two separate proposals to monitor and curb the e-commerce giant's facial recognition service.

Amazon's service, called Rekognition, has come under scrutiny from critics who warn that it could lead to false arrests and incorrect matches, while supporters say it makes the public safer. Law enforcement agencies in Oregon and Florida have used the technology.

Amazon tried to block the vote on the two non-binding proposals, which were backed by civil liberties groups, but the U.S. Securities and Exchange Commission overruled the company.

One proposal called for a study of the extent to which Rekognition harms human rights and privacy. The other would have required the company to stop selling the technology to governments unless it determined that such sales did not violate civil liberties.